Learning to Feel With Light

By Fink Densford

BU News Service

It can be a little hard to focus when you’re sharing a room with a robot that’s taller than you. Especially when it has enormous red arms, awkwardly jutting from a thin frame. Each thick arm on Baxter, the robot I’ve been sitting next to for half an hour, is tipped with a two-pronged plastic claw. The claw is an advanced version of the handheld kind used to pick up litter or pinch unsuspecting siblings.

The room I’m sharing with Baxter is a large glass cubicle in the Stata Center at the Massachusetts Institute of Technology. Outside the windowed cage are scattered desks, each furnished with stacks of paperwork, food wrappers, cables and computer equipment. But the most interesting thing is at the end of Baxter’s arm, where the claw is currently gripping an egg.

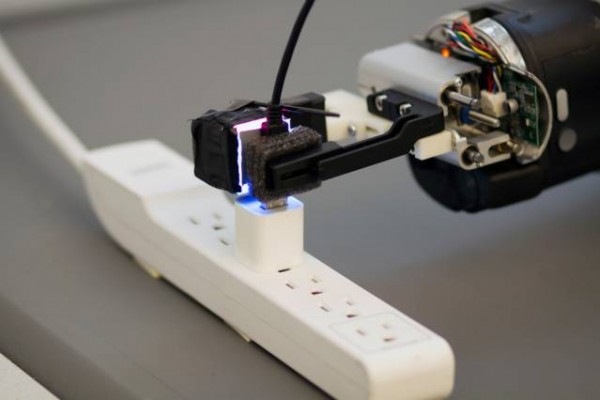

What I had come to see was perched on the tip of the robot’s claw, pressed up against one side of the egg: a small, taped-up black box about the size of an iPhone power plug. With a tacky-textured pad on one side, the little black box does something special – it “feels” with light.

In the room with Baxter and me is Rui Li, a PhD student at MIT and lead developer on the “GelSight” program, which aims to give robots a sense of touch through the use of cameras and lights.

Li slipped the egg from Baxter’s grip. A moment later, he placed a small empty jar – the kind in which you’d normally find maraschino cherries or olives – in the claw of the robot. Then he began to fill it with water.

Nothing noticeable happened, but that was the point. Normally, this would be a very advanced task for a robot – tactile sensing is one of the most difficult things robots can do – and the robot would drop the jar as it gained weight. Most robots are programmed to work around the lack of tactile sensing – finely tuned to perform tasks with no need to feel. Computer monitors in front of Li, used to control and program the robot and its sensors, lit up with numbers showing that the claw was automatically adjusting its pressure as the jar got heavier, keeping it from slipping out of its grasp.

“Push your finger against it,” offered Li, gesturing to the small pad, the sensor in question. On the screen of his laptop, a green and black grid showed an image of anything pushed against the small sensor in Baxter’s claw. The sensor did so by reflecting the light from four LEDs, positioned around the sides of the gel pad, off the pad’s painted metallic surface. When something pressed against the gel pad, the other side was deformed in the same way. A camera behind the pad constantly fed the image to a computer, which created a 3D image of the object pressed against the pad.

By creating a microscopically detailed 3D image of what it is holding, the GelSight sensor doesn’t have to rely on pressure readings or any other complex interpretations. It can literally see the object and how it’s moving, then apply enough pressure to hold on tight.
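The article does not say which algorithm turns the camera image into 3D shape. One common way to recover surface shape from a surface lit from several known directions is photometric stereo, and the sketch below illustrates that general idea for a single pixel. It is an assumption-laden toy, not GelSight's actual code: the light directions in `LIGHTS`, the matte (Lambertian) reflection model, and the function names `estimate_normal` and `render` are all hypothetical and chosen only for illustration.

```python
import math

# Hypothetical directions for four LEDs, one per side of the pad,
# each tilted toward the camera axis (+z). Not taken from the real sensor.
LIGHTS = [
    (1.0, 0.0, 1.0),
    (-1.0, 0.0, 1.0),
    (0.0, 1.0, 1.0),
    (0.0, -1.0, 1.0),
]


def normalize(v):
    """Scale a 3-vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(a, b):
    """Solve the 3x3 linear system a * x = b with Cramer's rule."""
    d = det3(a)
    if abs(d) < 1e-12:
        raise ValueError("light directions do not span 3D space")
    solution = []
    for col in range(3):
        # Replace one column of a with b, then take the determinant ratio.
        m = [[b[r] if c == col else a[r][c] for c in range(3)]
             for r in range(3)]
        solution.append(det3(m) / d)
    return solution


def estimate_normal(intensities, lights):
    """Least-squares surface normal for one pixel.

    Assumes one brightness reading per light and a matte surface,
    so brightness = albedo * dot(light_direction, normal).
    """
    dirs = [normalize(l) for l in lights]
    # Normal equations (L^T L) g = L^T i, where g = albedo * normal.
    ltl = [[sum(d[r] * d[c] for d in dirs) for c in range(3)]
           for r in range(3)]
    lti = [sum(d[r] * i for d, i in zip(dirs, intensities))
           for r in range(3)]
    return normalize(solve3(ltl, lti))


def render(normal, lights, albedo=1.0):
    """Simulate the brightness a matte surface patch shows under each light."""
    return [albedo * max(0.0, dot(normalize(l), normal)) for l in lights]


if __name__ == "__main__":
    tilted = normalize((0.2, -0.1, 1.0))   # a slightly tilted patch of gel
    readings = render(tilted, LIGHTS, albedo=0.5)
    print(estimate_normal(readings, LIGHTS))
```

Repeating this estimate at every pixel gives a map of which way each tiny patch of the pad is tilted. Turning that map into a full height profile takes a further integration step, which this sketch leaves out.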

In this case, the screen showed my fingerprint, its complex canyons and ridges displayed in bright detail, in 3D. Li also showed me more complex images that the sensor was able to pick up, including two human hairs that crisscrossed, with enough detail to tell which was on the bottom. Li seemed especially proud of the clear image of a “1” from a hundred-dollar bill.

The device, Li explained, provided anywhere from a 100 by 100 pixel image to a 600 by 800 pixel image of the surface. In doing so, the sensor actively monitored thousands of points at a minimum. The closest non-visual tactile sensor that is commercially available, said Li, provides around 19.

Li has previously shown that the sensor, when attached to a robot, can allow it to pick up a free-hanging USB cord and plug it in – a task that comes with about a single millimeter of leeway between success and failure. Baxter, it seems, is about as sensitive as robots come.

And the sensor is able to hold more than just USB cords, eggs and jars. Li said that since the sensor produces high-resolution 3D geometry of the surfaces it touches, it could be used for any object – even a potato chip can be held between the plastic claws without cracking.

Robots often struggle to feel the way humans do, and the sensor is effectively giving robots as a whole a new sense to take advantage of. The unit costs a mere fraction of what its competitors do, said Li, and it is quickly moving towards commercial availability.

So while Baxter may be a menacing-looking piece of machinery, it’s the tip of its finger that holds the most potential. And with Li behind the device, its impressive list of abilities is bound to keep growing.
